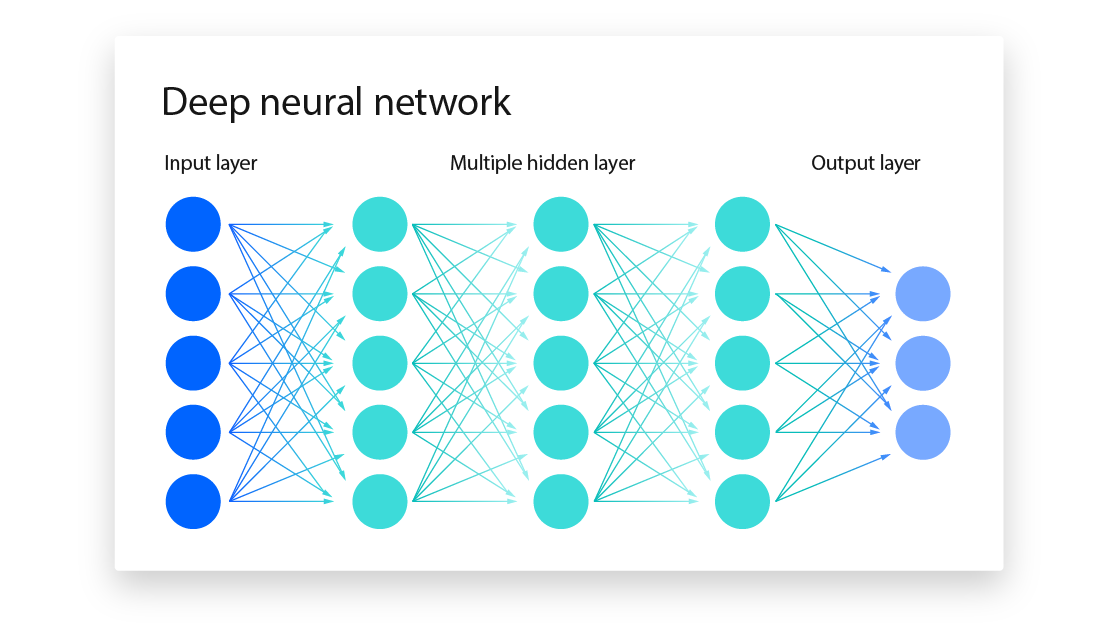

This repository contains implementations and examples of various neural network architectures and machine learning algorithms. From fundamental feedforward networks to advanced convolutional and recurrent models, this collection serves as a practical resource for understanding and applying machine learning concepts.

- What is the role of mini-batch training in neural networks?

- What is the difference between a supervised and an unsupervised learning algorithm?

- What is a state-value function in reinforcement learning?

- What is the purpose of the Kullback-Leibler (KL) divergence loss function?

- What is early stopping in neural network training?

- What is the vanishing gradient problem in neural networks?
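To give a flavor of the topics above, here is a minimal sketch of the KL divergence between two discrete probability distributions, D_KL(p || q) = Σ p_i · log(p_i / q_i). It is an illustration only, not code taken from this repository: the function name `kl_divergence` and the example distributions are hypothetical, and it uses only the Python standard library.

```python
import math


def kl_divergence(p, q):
    """Return D_KL(p || q) in nats for two discrete distributions.

    Hypothetical helper for illustration; p and q are sequences of
    probabilities over the same outcomes, each assumed to sum to 1.
    """
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0.0:
            continue  # a term with p_i = 0 contributes 0 by convention
        if q_i == 0.0:
            return math.inf  # p puts mass where q has none
        total += p_i * math.log(p_i / q_i)
    return total


# Example distributions (assumed values, chosen only for illustration).
p = [0.5, 0.5]
q = [0.9, 0.1]

print(kl_divergence(p, p))  # identical distributions give 0.0
print(kl_divergence(p, q))  # positive when the distributions differ
print(kl_divergence(q, p))  # generally differs from the line above
```

The last two lines show that the measure is not symmetric, which is one reason it is used as a directed loss (how well `q` approximates `p`) rather than as a distance.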

- Diverse Implementations: Explore a wide range of neural network architectures, including feedforward, convolutional, and recurrent networks, along with popular machine learning algorithms.

- Comprehensive Examples: Find detailed examples and use cases for each implemented model, demonstrating their application in domains such as computer vision and natural language processing.

- Modular and Extensible: Each implementation is designed with modularity in mind, making it easy to adapt and extend for specific tasks or research projects.

- Detailed Documentation: Extensive documentation accompanies each implementation, providing insights into the architecture, hyperparameters, and recommended use cases.

- Performance Benchmarks: Compare the performance of different models on benchmark datasets, enabling easy evaluation and selection of appropriate architectures for specific tasks.


```bash
# Clone the repository
git clone https://github.com/aw-junaid/Neural-Network-and-Machine-Learning.git

# Move into the cloned directory
cd Neural-Network-and-Machine-Learning

# Install dependencies
pip install -r requirements.txt
```

For support, please open an issue or reach out to abdulwahabjunaid07@gmail.com.